Sound waves are detected by converting vibrations into electrical signals. Wave interference and pitch shape both how we experience sound and how audio technology is designed.

Headphones work on a few basic physical principles. A coil and a magnet drive a thin diaphragm, turning electrical signals into vibrations in the air that you hear as sound. Noise-canceling models also produce a second sound wave that is inverted relative to the unwanted noise, so the two largely cancel when they combine. Together, these actions let you hear music and block out noise.

Sound waves are an integral part of our daily lives, influencing everything from how we communicate to how we enjoy media. Understanding the complex phenomena associated with sound waves can deepen our appreciation of their role and application. This article explores various aspects of sound waves, including detection, interference, pitch, localization, and the mathematical representation of these phenomena.

DTS Headphone:X relies on spatial audio processing, which mimics how human ears perceive sound in a 3D space. It draws on psychoacoustics, the study of how people perceive sound, to create a sense of direction and distance. By manipulating audio signals, it simulates how sounds reflect, diffuse, and travel through an environment, making them appear to come from specific locations. This technology depends on accurately modeling sound wave behaviors, including time delays, changes in frequency content, and amplitude variations. Overall, it creates a realistic and immersive audio experience by replicating natural sound phenomena.

Detecting Sound Waves:

Detecting sound waves involves capturing the vibrations in a medium (usually air) and translating them into a format that our brains can interpret. Our ears are equipped with intricate structures that detect these vibrations.

The outer ear collects sound waves, which then travel through the ear canal to the eardrum, causing it to vibrate. These vibrations pass through the three small bones of the middle ear to the cochlea in the inner ear, where hair cells convert them into electrical signals that the brain processes as sound.

In technology, microphones perform a similar function by converting sound waves into electrical signals that can be recorded or amplified. Various types of microphones, including dynamic, condenser, and ribbon, are designed to handle different frequencies and volumes.

Wave Interference:

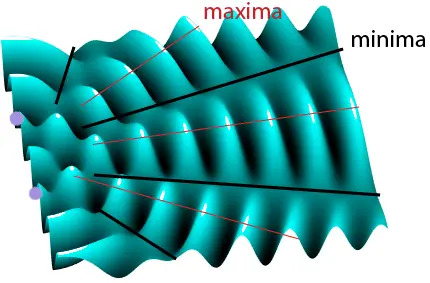

Wave interference occurs when two or more sound waves overlap and combine. The result can be constructive interference, where waves that are in phase reinforce each other, or destructive interference, where waves that are out of phase partly or fully cancel each other out. Interference matters in many applications, such as the acoustics of concert halls, noise-canceling headphones, and the design of audio systems.
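
As a rough illustration of the two cases, the sketch below adds two sine waves sample by sample and reports the peak of the result. The function names, the 440 Hz tone, and the 8000 Hz sample rate are arbitrary choices made for this example, not values taken from any particular audio system.

```python
import math

def superpose(amp_a, amp_b, phase_b, freq_hz=440.0, sample_rate=8000, n_samples=800):
    """Sum two sine waves of the same frequency, sample by sample."""
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        wave_a = amp_a * math.sin(2 * math.pi * freq_hz * t)
        wave_b = amp_b * math.sin(2 * math.pi * freq_hz * t + phase_b)
        samples.append(wave_a + wave_b)
    return samples

def peak(samples):
    """Largest absolute value reached by the combined wave."""
    return max(abs(s) for s in samples)

# In phase (phase difference 0): the waves reinforce each other.
constructive = peak(superpose(1.0, 1.0, 0.0))
# Half a cycle apart (phase difference pi): the waves cancel.
destructive = peak(superpose(1.0, 1.0, math.pi))

print(f"constructive peak: {constructive:.3f}")
print(f"destructive peak:  {destructive:.3f}")
```

With equal amplitudes, the in-phase pair peaks at about twice the amplitude of either wave, while the out-of-phase pair sums to nearly zero, which is the idea noise-canceling headphones rely on.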

In a practical setting, interference is managed to optimize sound quality. For instance, sound engineers adjust speaker placement, timing, and room treatment to minimize destructive interference and enhance the listening experience in different environments.

Sound Waves and Pitch:

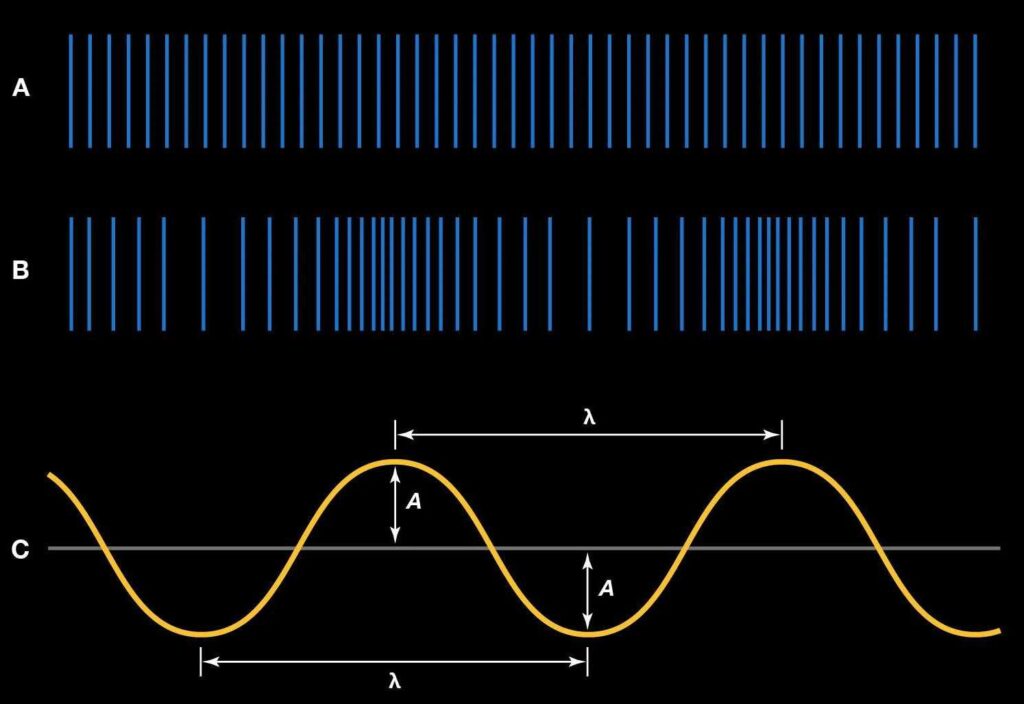

Pitch is the perceptual quality of sound that allows us to determine how high or low a sound is. It is closely related to the frequency of the sound waves—higher frequencies correspond to higher pitches, while lower frequencies correspond to lower pitches. The human ear can typically detect frequencies ranging from 20 Hz to 20 kHz.

In musical terms, pitch determines the notes we hear and is fundamental to the creation of melodies and harmonies. Musical instruments are designed to produce specific pitches by varying the length, tension, and mass of their vibrating elements.
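
For a stretched string, the link between pitch and length, tension, and mass can be sketched with the standard ideal-string relation f = sqrt(T / mu) / (2L). The example below assumes an ideal string (no stiffness or damping); the function name and the sample values are hypothetical and chosen only for illustration.

```python
import math

def string_fundamental_hz(length_m, tension_n, mass_per_length_kg_m):
    """Fundamental frequency of an ideal stretched string: f = sqrt(T / mu) / (2 L)."""
    return math.sqrt(tension_n / mass_per_length_kg_m) / (2.0 * length_m)

# Hypothetical string: 0.65 m long, 70 N of tension, 0.4 g per metre.
base = string_fundamental_hz(0.65, 70.0, 0.0004)

# Halving the vibrating length doubles the frequency (one octave up).
half_length = string_fundamental_hz(0.325, 70.0, 0.0004)

# Quadrupling the tension also doubles the frequency.
more_tension = string_fundamental_hz(0.65, 280.0, 0.0004)

print(f"base: {base:.1f} Hz")
print(f"half length: {half_length:.1f} Hz")
print(f"4x tension: {more_tension:.1f} Hz")
```

This is why pressing a string against a fret (shortening it) or tightening a tuning peg (raising the tension) both raise the pitch.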

Neurocognitive Development: Normative Development

Neurocognitive development refers to how the brain develops and processes information related to sound. From infancy, humans are exposed to a range of sounds that contribute to cognitive and perceptual development.

Babies start recognizing their mother’s voice and other familiar sounds early in life, which plays a critical role in language acquisition and social interaction.

Normative development in this context involves the typical progression of auditory skills, such as sound localization and language development. These skills are crucial for effective communication and cognitive growth throughout life.

Sound Source Localization:

Sound source localization is the ability to determine the origin of a sound. This skill relies on several cues, chiefly the time delay between when a sound reaches each ear and the difference in sound intensity between the ears. Our brains use these cues to pinpoint the direction of a sound source, and draw on further cues such as loudness and reverberation to judge its distance.
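
The time-delay cue can be estimated with a simple far-field model in which the extra path to the far ear is d * sin(angle). This is a simplified sketch: it ignores the shape of the head, and the 0.18 m ear spacing and 343 m/s speed of sound are assumed round values, not measurements.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at room temperature
EAR_SPACING_M = 0.18        # assumed distance between the ears (illustrative value)

def interaural_time_difference(azimuth_deg,
                               ear_spacing_m=EAR_SPACING_M,
                               speed_m_s=SPEED_OF_SOUND_M_S):
    """Arrival-time difference in seconds for a distant source.

    azimuth_deg is 0 straight ahead and 90 directly to one side.
    Uses the simple model: delay = d * sin(angle) / c.
    """
    return ear_spacing_m * math.sin(math.radians(azimuth_deg)) / speed_m_s

for angle in (0, 30, 60, 90):
    delay_us = interaural_time_difference(angle) * 1e6
    print(f"{angle:>2} degrees: {delay_us:.0f} microseconds")
```

Under these assumptions the delay is zero for a source straight ahead and grows to roughly half a millisecond for a source directly to one side, which shows how small the timing differences are that the brain works with.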

In technology, sound source localization is applied in various fields, from improving the accuracy of hearing aids to enhancing the performance of surveillance systems and virtual reality environments.

Sound Interference:

Sound interference, as mentioned earlier, can have both constructive and destructive effects. It is a key consideration in fields like acoustics and audio engineering. For example, in concert halls, careful design and placement of sound-absorbing materials help manage interference and improve sound clarity.

In everyday life, unwanted sound interference, such as echoes or noise pollution, can be mitigated using soundproofing materials and noise-canceling technologies.

Television and Radio Signals:

Television and radio signals are transmitted through electromagnetic waves, not sound waves. However, understanding sound waves is crucial in the context of audio signals transmitted via these media.

For instance, the quality of audio transmission in television and radio broadcasts depends on how well sound waves are captured, transmitted, and reproduced. Advancements in technology have improved the clarity and fidelity of audio in broadcasting, enhancing the overall media experience for consumers.

Mathematical Representation of Interference:

The mathematical representation of wave interference rests on the principle of superposition, which states that the resultant wave at any point is the sum of the individual waves at that point. Interference patterns follow directly from this sum.
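
As a worked case, consider two waves of the same angular frequency. The symbols here ($A_1$, $A_2$ for the amplitudes, $\omega$ for the angular frequency, $\varphi$ for the phase difference) are introduced for this example only.

$$
y(t) = A_1 \sin(\omega t) + A_2 \sin(\omega t + \varphi) = A \sin(\omega t + \theta),
\qquad
A = \sqrt{A_1^2 + A_2^2 + 2 A_1 A_2 \cos\varphi}
$$

When $\varphi = 0$ the amplitude is $A = A_1 + A_2$ (fully constructive), and when $\varphi = \pi$ it is $A = |A_1 - A_2|$ (fully destructive), so two equal waves half a cycle apart cancel completely.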

Mathematical tools, such as Fourier analysis, are used to analyze and predict how sound waves will interact. These models help in designing audio systems, solving acoustical problems, and optimizing sound quality.
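
To give a feel for Fourier analysis, the sketch below mixes two tones and then recovers their frequencies with a direct discrete Fourier transform. It is a minimal, slow illustration using only the standard library; real audio software would normally use an optimized FFT routine. The tone frequencies, amplitudes, and sample rate are arbitrary example values.

```python
import cmath
import math

def dft_magnitudes(samples):
    """Amplitude of each frequency bin below the Nyquist limit (direct DFT).

    The 2 / n scaling recovers sine amplitudes for bins k > 0.
    """
    n = len(samples)
    magnitudes = []
    for k in range(n // 2):
        total = sum(samples[i] * cmath.exp(-2j * math.pi * k * i / n)
                    for i in range(n))
        magnitudes.append(abs(total) * 2 / n)
    return magnitudes

# One second of signal at 400 samples per second, so bin k corresponds to k Hz.
sample_rate = 400
signal = [
    1.0 * math.sin(2 * math.pi * 50 * i / sample_rate)     # 50 Hz tone, amplitude 1.0
    + 0.5 * math.sin(2 * math.pi * 120 * i / sample_rate)  # 120 Hz tone, amplitude 0.5
    for i in range(sample_rate)
]

mags = dft_magnitudes(signal)
# The two bins with the largest amplitude identify the tones in the mix.
strongest = sorted(range(len(mags)), key=lambda k: mags[k], reverse=True)[:2]
print(strongest)
```

The combined signal looks like a single irregular wave in the time domain, but the transform separates it back into the two tones that were superposed.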

Practical Implications of Superposition:

The principle of superposition has practical implications in various fields. In acoustics, it helps engineers design spaces with optimal sound quality. In signal processing, it allows for the effective mixing of multiple audio signals. In everyday life, understanding superposition helps in addressing issues like sound interference and optimizing audio equipment.

FAQs:

1. What is the main function of sound wave detection?

Sound wave detection involves converting vibrations in a medium into signals that our brains interpret as sound.

2. How does wave interference affect sound?

Wave interference can cause sound waves to amplify each other (constructive interference) or cancel each other out (destructive interference).

3. What determines the pitch of a sound?

The pitch of a sound is determined by the frequency of the sound waves; higher frequencies result in higher pitches, and lower frequencies result in lower pitches.

4. How does sound source localization work?

Sound source localization relies on cues like time delays and intensity differences between the ears to determine where a sound is coming from.

5. What is the principle of superposition in sound waves?

The principle of superposition states that the resultant wave is the sum of individual waves, which helps analyze and manage wave interference.

Conclusion:

Sound waves are a fascinating and multifaceted topic, from their detection and interference to their mathematical representation. Understanding these concepts enhances our appreciation of the role sound plays in our lives, from communication and entertainment to technological applications. Whether you are a student, a professional in acoustics, or simply an enthusiast, a deeper grasp of sound waves can enrich your knowledge and improve your practical interactions with sound.